A sudden traffic drop in Google Search Console can feel alarming, but it often has a clear cause. With a simple step-by-step review, you can tell whether the problem is a real ranking loss, a reporting delay, seasonal demand, or a technical block.

That matters because the fix depends on what changed. A page may have lost visibility, a query may have slipped, or a noindex tag, crawl error, or mobile issue may be holding traffic back. Starting with the right checks in Search Console keeps you from wasting time on guesses.

Once you know where the drop began, the next steps get much easier.

Start with the right data so you do not chase the wrong problem

Before you change anything, confirm that the drop is real and locate where it started. Search Console gives you the clues, but only if you compare the right dates and break the data into the right pieces. If you skip that step, you can end up fixing the wrong page, the wrong query, or the wrong device.

Compare before and after time periods the smart way

Open the performance report first. Then choose a clean date range and compare the week or month before the drop with the period after it. A steady date range makes the trend easier to trust, and it keeps one odd day from clouding the picture.

The exact start date matters more than most people think. If traffic fell on Tuesday, compare the seven or 28 days before Tuesday with the same length after Tuesday. That helps you see whether the decline began all at once or faded in slowly. A sharp break often points to a technical issue or a major search change. A slow slide usually points to content, intent, or competition.
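
To keep both sides of the comparison the same length, it can help to compute the two windows from the drop date instead of picking them by eye. This is a minimal sketch: the function name `comparison_windows` and the sample drop date (a Tuesday) are made up for illustration.

```python
from datetime import date, timedelta


def comparison_windows(drop_date: date, days: int = 28):
    """Return (before, after) windows of equal length around drop_date.

    Each window is an inclusive (start, end) pair. The drop date itself
    is treated as the first day of the "after" window.
    """
    before = (drop_date - timedelta(days=days), drop_date - timedelta(days=1))
    after = (drop_date, drop_date + timedelta(days=days - 1))
    return before, after


# Hypothetical drop date, used only for illustration.
before, after = comparison_windows(date(2026, 5, 5), days=7)
print("before:", before[0].isoformat(), "to", before[1].isoformat())
print("after: ", after[0].isoformat(), "to", after[1].isoformat())
```

Enter the two ranges in the performance report's date comparison. Using whole weeks keeps the same mix of weekdays on each side.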

Google’s own traffic drop guidance recommends checking impressions, clicks, average position, and CTR together. That mix tells a fuller story than clicks alone.

A quick read of the chart can help you narrow the cause:

- Clicks down, impressions steady often means the page still appears, but fewer people click it.

- Clicks and impressions both down usually points to lost visibility.

- Average position worse suggests ranking pressure or a technical problem.

- CTR down with stable impressions can point to snippet changes or search results that draw attention away.

Compare the same length of time on both sides of the drop. Uneven ranges create fake patterns.
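
The chart patterns above can be turned into a rough rule of thumb. The sketch below assumes you have copied the totals for each period by hand. The 10% threshold and the one-position cutoff are arbitrary assumptions, not values Google publishes.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from before to after; 0.0 when there is no baseline."""
    return 0.0 if before == 0 else (after - before) / before


def read_pattern(before: dict, after: dict, threshold: float = 0.10) -> str:
    """Map two sets of period totals to one of the chart patterns."""
    clicks = pct_change(before["clicks"], after["clicks"])
    impressions = pct_change(before["impressions"], after["impressions"])
    # Average position counts up from 1, so a positive shift means worse.
    position_shift = after["position"] - before["position"]

    if clicks > -threshold:
        return "no meaningful click drop"
    if impressions <= -threshold:
        if position_shift >= 1.0:
            return "lost visibility with worse average position"
        return "lost visibility"
    return "clicks down, impressions steady: review CTR and snippets"


# Made-up totals for two equal-length periods.
before = {"clicks": 1000, "impressions": 20000, "position": 6.2}
after = {"clicks": 700, "impressions": 19500, "position": 6.4}
print(read_pattern(before, after))
```

Treat the label as a starting point for the checks that follow, not as a verdict.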

Separate branded traffic from non-branded traffic

Next, split branded and non-branded searches. If branded traffic falls, the problem may be outside SEO, such as weaker brand demand, fewer returning users, or a broader marketing issue. If people stop searching for your name, Search Console is showing a symptom, not the root cause.

Non-branded traffic tells a different story. When those queries fall, the issue is often tied to rankings, content quality, search intent, or a technical block. That is where most Google Search Console fixes for sudden traffic loss begin, because generic terms are usually the first place ranking trouble shows up.

Use simple labels when you review queries:

- Branded: searches that include your company, product, or site name.

- Non-branded: searches that describe a topic, problem, or service without your name.

If branded terms hold steady while non-branded terms drop, the site itself may still have healthy demand. In that case, the loss often sits in pages, queries, or search intent shifts rather than in your brand.
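
If you export the query list, a small script can do the branded split for you. This sketch assumes each row is a dictionary with `query` and `clicks` keys. The brand terms and sample rows are hypothetical, so swap in your own names and common misspellings.

```python
import re

# Hypothetical brand terms; replace with your own names and variants.
BRAND_TERMS = ["acme", "acme analytics"]


def build_brand_pattern(terms):
    """Compile one case-insensitive pattern that matches any brand term."""
    escaped = sorted((re.escape(t) for t in terms), key=len, reverse=True)
    return re.compile(r"\b(?:" + "|".join(escaped) + r")\b", re.IGNORECASE)


def split_by_brand(rows, pattern):
    """Sum clicks into branded and non-branded buckets."""
    totals = {"branded": 0, "non_branded": 0}
    for row in rows:
        label = "branded" if pattern.search(row["query"]) else "non_branded"
        totals[label] += row["clicks"]
    return totals


# Made-up export rows.
rows = [
    {"query": "acme login", "clicks": 120},
    {"query": "how to fix traffic drop", "clicks": 80},
    {"query": "Acme Analytics pricing", "clicks": 40},
]
totals = split_by_brand(rows, build_brand_pattern(BRAND_TERMS))
print(totals)
```

Run it once on the period before the drop and once on the period after, then compare the two buckets.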

Check whether one page, one section, or the whole site is affected

Now look at scope. A sitewide drop usually points to a technical issue, a site-level quality problem, or an algorithm shift. A drop limited to a few pages often points to thin content, indexing trouble, weak internal links, or a page that no longer matches search intent.

Start with the pages that lost the most clicks. That saves time and keeps the diagnosis grounded. Once you find the biggest losers, move into the query view for those pages and see which search terms fell with them.

A simple way to sort the problem is to ask three questions:

- Did traffic drop across the whole site?

- Did only a few pages fall hard?

- Did one device, country, or query group take the hit?

That breakdown matters because each pattern points in a different direction. A sitewide loss can fit a crawl, indexing, or algorithm issue. A page-level loss can point to content decay or internal linking gaps. A country or device drop can reveal a local ranking shift, a mobile issue, or a market-specific change.
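
One way to answer the scope question is to measure how much of the total loss sits in the worst few pages. The sketch below works on two page-to-clicks mappings, one per period. The paths and numbers are invented for illustration.

```python
def click_loss_by_page(before: dict, after: dict):
    """Return (page, click change) pairs, biggest losers first."""
    pages = set(before) | set(after)
    deltas = {p: after.get(p, 0) - before.get(p, 0) for p in pages}
    return sorted(deltas.items(), key=lambda item: item[1])


def loss_concentration(deltas, top_n: int = 3) -> float:
    """Share of all lost clicks that comes from the top_n losing pages."""
    losses = sorted((-d for _, d in deltas if d < 0), reverse=True)
    total = sum(losses)
    return 0.0 if total == 0 else sum(losses[:top_n]) / total


# Made-up clicks per page for two equal-length periods.
before = {"/a": 500, "/b": 300, "/c": 200, "/d": 100}
after = {"/a": 150, "/b": 290, "/c": 195, "/d": 105}

deltas = click_loss_by_page(before, after)
print(deltas[0])
print(round(loss_concentration(deltas, top_n=1), 2))
```

A share close to 1.0 suggests a page-level problem. A loss spread thinly across many pages looks more like a sitewide issue.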

If the Search Console numbers still feel unclear, check GA4 beside them. GA4 helps confirm whether the drop is real across site visits or whether Search Console is showing a reporting shift. When both tools move in the same direction, you have a much stronger signal. If they disagree, the problem may sit in the reporting layer rather than the site itself.
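
The cross-check between the two tools comes down to asking whether both moved in the same direction. This is a rough sketch under two assumptions: the GA4 figure is organic search sessions for the same dates, and a 5% tolerance separates a real move from noise.

```python
def direction(before: float, after: float, tolerance: float = 0.05) -> str:
    """Classify a change as 'down', 'up', or 'flat'."""
    if before == 0:
        return "flat" if after == 0 else "up"
    change = (after - before) / before
    if change <= -tolerance:
        return "down"
    if change >= tolerance:
        return "up"
    return "flat"


def compare_sources(gsc_clicks, ga4_sessions) -> str:
    """Each argument is a (before, after) pair for the same date ranges."""
    gsc = direction(*gsc_clicks)
    ga4 = direction(*ga4_sessions)
    if gsc == "down" and ga4 == "down":
        return "both down: the loss is likely real"
    if "down" in (gsc, ga4):
        return "sources disagree: check the reporting layer first"
    return "no shared decline"


# Made-up totals.
print(compare_sources((1000, 600), (900, 560)))
print(compare_sources((1000, 600), (900, 890)))
```

Search Console clicks and GA4 sessions are counted differently, so compare direction and rough size, not exact totals.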

At this stage, the goal is simple: identify where the loss lives before you touch anything. Once you know whether the drop is broad or narrow, branded or non-branded, and tied to one page or the whole site, the next fix becomes much easier to choose.

Look for technical blocks that stop Google from crawling or indexing pages

When traffic falls fast, technical blocks are often the first thing to check. They can hide pages from Google, confuse crawl paths, or stop a page from being indexed at all.

This is where the most practical Google Search Console fixes for sudden traffic loss start to pay off. If a page is blocked, broken, or removed from discovery paths, rankings can vanish even when the content itself looks fine.

Use the Pages report to find indexing errors and exclusions

Open the Pages report in Search Console and review the split between Indexed and Not indexed pages. Then scan the reasons listed under Not indexed, such as “Crawled - currently not indexed” and “Discovered - currently not indexed.” If the indexed count drops while the not-indexed count rises, you may be looking at a technical block, not a content problem.

The table below the chart matters just as much as the graph. It shows why Google skipped a URL, which makes it easier to spot patterns like noindex, crawl errors, duplicate pages, or redirects. A spike in exclusions after a site change is a strong warning sign.

Pay close attention to these shifts:

- Indexed pages falling can mean Google has lost access to parts of the site or no longer considers those URLs worth keeping.

- Not indexed pages rising often points to blocked, duplicate, or low-value URLs.

- Crawled pages without indexing can mean Google saw the page but chose not to keep it.

- Discovered pages not crawled often suggests crawl budget problems or poor internal links.

A sudden rise in excluded pages is rarely random. It usually follows a site update, template change, plugin issue, or migration problem.

You can also compare the issue report with the date your traffic dropped. If the timing matches, you have a solid lead. Google’s single-page troubleshooting guidance explains how crawl and indexing signals appear for individual URLs, which makes it easier to connect the report to the problem.

Inspect important URLs one by one

The URL Inspection tool shows what Google can do with a specific page. Use it on your most important landing pages first, especially the ones that lost traffic the hardest.

Look for three things: whether Google can crawl the page, whether indexing is allowed, and whether the rendered page looks correct. If any of those checks fails, the page may disappear from search or rank far lower than before.

A live test is especially useful after a fix. It tells you what Google sees right now, not just what it saw last time it visited. Once the problem is fixed, request indexing so Google can recheck the page sooner.

Use this order:

- Enter the exact URL.

- Review the current and live results.

- Check crawl and index status.

- Fix the issue.

- Request indexing after the page passes the live test.

That workflow saves time because it separates old data from current behavior. If the live test still shows a block, keep debugging before asking Google to try again.

Review robots.txt, noindex tags, canonicals, and redirects

These four settings can block or confuse Google in different ways, and they often break after a recent change.

- robots.txt can prevent Google from crawling a page at all. A bad rule can block entire folders.

- noindex tags tell Google not to keep a page in the index, even if it can crawl the page.

- canonicals tell Google which version of a page is preferred. A wrong canonical can send ranking signals to the wrong URL.

- redirects move users and bots to another page. A broken chain, loop, or wrong destination can kill visibility.

Check whether these settings changed during a CMS update, plugin install, redesign, or migration. That is where many sudden drops begin. A safe-looking edit can silently tell Google to ignore pages you need indexed.
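
Three of these checks can be scripted against a saved copy of your robots.txt and page HTML. The sketch below uses only Python's standard library. `urllib.robotparser` does not reproduce every detail of how Google matches rules (wildcards, for example), and the script ignores noindex sent through an `X-Robots-Tag` HTTP header, so treat the result as a first pass. The sample robots.txt, HTML, and URLs are invented.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """Check a URL against robots.txt rules using the stdlib parser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


class IndexSignalParser(HTMLParser):
    """Collect the meta robots noindex flag and the canonical URL."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attributes.get("name", "").lower() in ("robots", "googlebot"):
            if "noindex" in attributes.get("content", "").lower():
                self.noindex = True
        if tag == "link" and "canonical" in attributes.get("rel", "").lower().split():
            self.canonical = attributes.get("href")


def check_page(html: str, url: str) -> dict:
    """Summarize indexing signals found in the page markup."""
    parser = IndexSignalParser()
    parser.feed(html)
    return {
        "noindex": parser.noindex,
        "canonical": parser.canonical,
        "canonical_points_elsewhere": parser.canonical not in (None, url),
    }


# Made-up inputs for illustration.
ROBOTS_TXT = "User-agent: *\nDisallow: /blog/\n"
PAGE_HTML = (
    '<html><head><meta name="robots" content="noindex, follow">'
    '<link rel="canonical" href="https://example.com/old-page"></head></html>'
)

print(googlebot_allowed(ROBOTS_TXT, "https://example.com/blog/post"))
print(check_page(PAGE_HTML, "https://example.com/new-page"))
```

Running this on a key template before and after a release is a cheap way to catch a stray noindex tag or a canonical that points at the wrong URL.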

Check server health, speed, and mobile usability

If Google runs into slow responses or downtime, it may crawl less often. That can delay new pages, slow down updates, and make rankings wobble. Repeated 5xx errors are even worse because they signal that the server is unstable.

Mobile usability matters too. If a page loads badly on phones, Google may rank it lower or crawl it less efficiently. Poor layout, blocked content, and hard-to-tap elements all make the page harder to trust.

Core Web Vitals help here because they show whether the page feels fast and stable. Keep the review simple:

- Check whether the server responds quickly.

- Look for downtime or repeated error spikes.

- Test key pages on mobile.

- Review Core Web Vitals for problem templates.

If a page is slow, Google may still crawl it, but not as often. If it fails often, crawling can drop fast, and that can look like traffic loss before the ranking losses catch up.
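
To spot repeated error spikes, you can compute the share of 5xx responses per day from your server logs. This sketch assumes you have already reduced the log to `(day, status_code)` pairs, ideally filtered to Googlebot requests. The sample data is invented.

```python
from collections import Counter


def server_error_rate_by_day(requests):
    """Return {day: share of responses with a 5xx status}."""
    totals, errors = Counter(), Counter()
    for day, status in requests:
        totals[day] += 1
        if 500 <= status <= 599:
            errors[day] += 1
    return {day: errors[day] / totals[day] for day in sorted(totals)}


# Made-up log extract: (day, HTTP status).
requests = [
    ("2026-05-04", 200), ("2026-05-04", 200), ("2026-05-04", 503),
    ("2026-05-05", 500), ("2026-05-05", 502), ("2026-05-05", 200),
    ("2026-05-05", 503),
]

rates = server_error_rate_by_day(requests)
for day, rate in rates.items():
    print(day, f"{rate:.0%}")
```

If the error rate jumps on or just before the day traffic fell, the server is a strong suspect.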

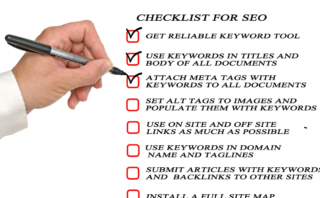

Find content problems that make Google lower your pages

When traffic drops after an update, the page itself is often the problem. Google tends to reward pages that are clearer, more complete, and more useful than the rest.

A drop does not always mean a penalty. More often, it means your page now looks weaker next to better results, especially after a core update or a strong competitor refresh. That is why the next step in Google Search Console fixes for sudden traffic loss is a content audit, not a guess.

Spot pages that lost rankings after a core update

Core updates often lift pages that answer the search better. They also push down pages that feel thin, vague, or hard to trust. If a page dropped after an update, compare it with the current top results before you change anything.

Look at the pages that now rank above you. Are they more specific? Do they cover the topic in more depth? Do they use clearer examples, fresher facts, or a stronger structure? Those differences usually tell you what Google prefers now.

Google says core updates are about helping users find more helpful content, not about punishing one page in isolation. Its core updates guidance recommends reviewing whether your content is helpful, reliable, and people-first. That is the right frame for recovery.

A drop after a core update often points to one of these patterns:

- The page is useful, but not as complete as competing pages.

- The page answers the question, but not as clearly.

- The page feels dated or too generic.

- The page lacks signs of trust, such as examples, sources, or firsthand detail.

A ranking loss in this case is a comparison problem. Google found pages that do the job better, so your page lost ground.

Refresh thin or outdated content first

Thin pages are easy for Google to overlook. If a page was weak before the update, small edits may not be enough. You may need to rebuild the page so it answers the topic with real depth.

Start with the parts readers notice first. Update stale stats, replace old examples, and add sections the current results already cover. If the page is missing key steps, common mistakes, or a short comparison, fix that gap before you worry about titles or meta descriptions.

Use this order when you refresh content:

- Replace outdated facts and screenshots.

- Add missing sections that users expect.

- Improve examples so the advice feels usable.

- Expand weak paragraphs with clearer detail.

- Tighten the structure so the page is easy to scan.

A thin page can sometimes recover with targeted improvements. However, if the page never had enough substance, a light refresh usually falls flat. In that case, the page needs more than a polish. It needs a better answer.

Fix pages that do not match search intent anymore

A page can lose traffic even when the writing is clean. If it answers the wrong question, sounds too salesy, or tries to cover too much at once, Google may rank it lower than pages that match the search better.

This happens when the intent shifts. A query that once favored broad advice may now favor step-by-step help, product comparisons, or local details. If you keep serving the old angle, the page starts missing the mark.

Compare your page with what ranks now. Ask simple questions:

- Does the page answer the same intent as the top results?

- Is it trying to sell when the search wants information?

- Is it too broad for a query that needs a narrow answer?

- Does the page bury the main answer under extra text?

When the top results are mostly how-to guides, a landing page full of brand language will struggle. When the top results are product comparisons, a vague overview will fall behind. The fix is to reshape the page around the real query, not the version you wish people were searching for.

Sometimes the problem is one section, not the whole page. In that case, rewrite the intro, tighten the heading structure, and move the answer higher on the page. Small changes help only when the page is already close to what users want.

Remove duplication and keyword cannibalization

Duplicate pages can split your own traffic. If several URLs target the same topic, they compete with each other and weaken each page’s chance to rank. Search engines then have to choose among similar pages, and that often lowers the whole group.

This issue shows up a lot on sites that publish many near-identical posts or service pages. One page ranks for a while, then another similar page starts pulling the same queries. The result is messy ranking data and unstable traffic.

The fix depends on the situation:

- Merge similar pages when they cover the same topic and one page is clearly stronger.

- Improve internal linking so the best page gets the clearest signals.

- Change the page target when two pages should serve different searches.

- Use canonical tags carefully when duplicate versions exist for technical reasons.

If two pages answer the same query, Google often treats them as a choice problem.

This is one of the most common content issues behind sudden traffic loss. Once you remove overlap, the strongest page has a much better chance to hold the ranking and earn clicks again.
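
Overlap is easier to find when you list the queries where more than one URL earns a meaningful share of clicks. The sketch below assumes rows exported with `query`, `page`, and `clicks` fields. The 20% share cutoff and the sample rows are assumptions for illustration.

```python
from collections import defaultdict


def find_cannibalized_queries(rows, min_share: float = 0.2):
    """Return {query: [pages]} where two or more pages each hold min_share of clicks."""
    by_query = defaultdict(lambda: defaultdict(int))
    for row in rows:
        by_query[row["query"]][row["page"]] += row["clicks"]

    flagged = {}
    for query, pages in by_query.items():
        total = sum(pages.values())
        if total == 0:
            continue
        competing = sorted(p for p, c in pages.items() if c / total >= min_share)
        if len(competing) > 1:
            flagged[query] = competing
    return flagged


# Made-up rows.
rows = [
    {"query": "seo audit checklist", "page": "/blog/seo-audit", "clicks": 60},
    {"query": "seo audit checklist", "page": "/services/seo-audit", "clicks": 40},
    {"query": "content pruning", "page": "/blog/prune", "clicks": 95},
    {"query": "content pruning", "page": "/blog/old-prune", "clicks": 5},
]

flagged = find_cannibalized_queries(rows)
print(flagged)
```

Each flagged query is a candidate for a merge, a retarget, or clearer internal linking.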

Check manual actions, security issues, and spam signals

A sudden traffic drop can happen when Google stops trusting part of a site. That trust loss may come from a manual action, a security problem, or spam patterns that look manipulative.

This check matters because these issues can hit hard and fast. If Google sees hacked pages, malware, sneaky redirects, or link spam, rankings can fall before the rest of the site looks broken. Start here, fix what you can confirm, and document every change you make.

Review the Manual Actions report first

Open Google Search Console, then go to Security & Manual Actions and select Manual actions. If Google has taken a manual action against your site, it means a person reviewed it and found a rule violation. That can cover spam, unnatural links, thin content, sneaky redirects, or other tactics that try to manipulate search results.

The report tells you what type of issue Google found and which pages or site sections are affected. Read that message carefully, because the fix depends on the exact problem. If the notice says unnatural links, the cleanup is different from a hacked-content issue or a pure spam violation.

Follow the issue details line by line, and keep a written record of every fix. Note the URLs you changed, what you removed, what you redirected, and when you finished. If you later file a reconsideration request, that paper trail helps you explain the cleanup clearly.

Google’s Manual actions report guidance explains that these actions are applied to pages or entire sites that break search rules. Once you know the exact reason, you can work through it with less guesswork.

A simple cleanup log can help:

- Affected URL or section: list every page tied to the issue.

- Problem found: note the exact manual action type.

- Fix applied: describe the change in plain language.

- Date completed: record when the page was cleaned up.

- Follow-up step: keep track of any request for review.

If the report names a problem, fix that problem exactly. Broad edits rarely help when Google has already identified the pattern.
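
The cleanup log can live in any spreadsheet, but a CSV file with fixed columns keeps entries consistent. This sketch uses column names derived from the list above. The sample entry is invented.

```python
import csv
import io

# Column names mirror the cleanup log fields; rename them to suit your team.
FIELDS = ["affected_url", "problem_found", "fix_applied", "date_completed", "follow_up"]


def write_cleanup_log(entries, stream) -> None:
    """Write cleanup entries as CSV with a header row."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(entries)


# Made-up entry for illustration.
entries = [
    {
        "affected_url": "https://example.com/partners/",
        "problem_found": "Unnatural links from your site",
        "fix_applied": "Removed paid links from the partner page",
        "date_completed": "2026-05-10",
        "follow_up": "Reconsideration request pending",
    }
]

buffer = io.StringIO()
write_cleanup_log(entries, buffer)
print(buffer.getvalue())
```

To save the log to disk, pass a file opened with `open("cleanup_log.csv", "w", newline="")` instead of the in-memory buffer.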

Look for hacked pages or security warnings

Next, open the Security issues report. If Google thinks your site was hacked, this report usually shows signs of injected pages, spammy redirects, hidden text, or malware. These problems can show up in search results as warning labels, and they can scare users away before they even click.

Hacked sites often leave small clues. You may see strange URLs you never created, pages stuffed with casino or pills content, or text hidden in white on a white background. Sometimes the homepage looks normal, but deeper pages have been altered to push spam links or fake offers.

Watch for these warning signs:

- Unexpected pages that target odd keywords or foreign-language terms.

- Spam links added to content, footers, or sidebars.

- Hidden text that users cannot see but search engines can still read.

- Suspicious redirects that send visitors to unrelated sites.

- Login pages or files that you did not place on the server.

These problems damage trust because they make your site look unsafe. They also interfere with indexing, since Google may stop showing affected pages or label them as dangerous. For a clear explanation of what Google treats as a site security problem, see the Security issues report help page.

If the site was compromised, clean the infection at the source. Remove malicious files, reset passwords, update plugins and themes, and review user accounts for anything unfamiliar. After that, request a review in the Security issues report so Google can confirm the site is clean.

Audit bad links and obvious spam patterns

Once security is clear, look at link patterns and spam signals. A site can lose traffic when its profile looks unnatural, low quality, or overly manipulative. That includes bought links, large bursts of low-grade guest posts, repeated anchor text, and pages built mainly to pass authority around.

You do not need to overcomplicate this check. Start with the obvious stuff first, because that is often where the damage is hiding. If your site picked up a wave of irrelevant links, or if a single keyword is used over and over in anchor text, the pattern may look artificial to Google.

Focus on cleanup before advanced tactics:

- Remove or nofollow links you control that are clearly spammy.

- Delete thin pages that only exist to place links.

- Merge or rewrite pages that repeat the same topic with little value.

- Fix comment spam, forum spam, and user-generated junk.

- Review partner pages, directory listings, and sitewide links for overuse.

A natural profile usually looks messy in places. A manipulated one looks too neat, too repetitive, or too eager to push one term. That difference matters. If Google sees a strong spam pattern, rankings can fall across the site, not just on the pages tied to the bad links.

The same review applies to on-site spam signals. Thin affiliate pages, scraped content, doorway pages, and pages packed with repetitive phrases can all drag trust down. If you find those problems, clean them before you move on to more technical fixes. A manual action or spam-related drop often will not recover until the site looks safe and honest again.

When you finish this pass, you should know three things: whether Google flagged the site directly, whether the site was hacked, and whether spam patterns are part of the problem. That gives you a much better path than guessing, and it keeps the recovery work focused on the issues that can actually bring traffic back.

Use search result changes to understand why clicks fell even when rankings look stable

A drop in clicks does not always mean your pages lost rank. Sometimes the search page changed around you, and your result got pushed lower on the screen even though the average position looks steady. That is why this check belongs in any traffic-drop review.

Look at the search results page as the real battleground. If Google adds more ads, rich results, or answer boxes above your listing, your page can lose clicks without showing a dramatic ranking drop. The search position may look fine on paper, but the page is getting less attention in practice.

Watch for changes in impressions, clicks, and CTR

Start with the three numbers that tell the real story: impressions, clicks, and CTR. If impressions stay flat while clicks fall, your page is still showing for the same searches, but fewer people choose it. If CTR drops while average position looks stable, the result page is likely taking attention away from your listing.

That gap often points to stronger competition on the results page, not a ranking problem. A page can hold the same spot and still lose ground if the title, snippet, or surrounding search features now pull users elsewhere. Google’s own traffic drop guidance calls out cases where users see your result but click a different one.

A quick comparison helps:

- Stable impressions, lower clicks often means the page still appears, but it earns less attention.

- Stable rankings, lower CTR can mean the snippet is weaker than before.

- Clicks down with impressions up may point to a new query mix, not a page problem.

- Both clicks and impressions down suggests real visibility loss, which needs a deeper check.

Use the query view and page view together. That split shows whether one keyword group is losing appeal or whether the entire page is getting fewer taps. If the decline is limited to a few queries, the issue may be a SERP layout change on those searches.
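
To find the queries where the listing lost appeal without losing rank, compare CTR per query and keep only those whose position barely moved. This is a sketch: the cutoffs (half a position, a 25% relative CTR drop) and the sample data are assumptions.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate, or 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0


def queries_with_ctr_drop(before, after, max_position_shift=0.5, min_ctr_drop=0.25):
    """Return (query, old CTR, new CTR) where rank held but CTR fell."""
    flagged = []
    for query, old in before.items():
        new = after.get(query)
        if new is None:
            continue
        old_ctr = ctr(old["clicks"], old["impressions"])
        new_ctr = ctr(new["clicks"], new["impressions"])
        if old_ctr == 0:
            continue
        stable_rank = abs(new["position"] - old["position"]) <= max_position_shift
        relative_drop = (old_ctr - new_ctr) / old_ctr
        if stable_rank and relative_drop >= min_ctr_drop:
            flagged.append((query, round(old_ctr, 3), round(new_ctr, 3)))
    return flagged


# Made-up per-query totals for two equal-length periods.
before = {
    "fix traffic drop": {"clicks": 90, "impressions": 1500, "position": 3.1},
    "gsc noindex": {"clicks": 40, "impressions": 800, "position": 4.0},
}
after = {
    "fix traffic drop": {"clicks": 45, "impressions": 1480, "position": 3.2},
    "gsc noindex": {"clicks": 38, "impressions": 790, "position": 4.1},
}

flagged = queries_with_ctr_drop(before, after)
print(flagged)
```

Search the flagged queries yourself and look at what now sits above your result.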

Check if new search features are taking space above your result

Now look at the search results themselves. Google often fills the top of the page with ads, featured snippets, AI Overviews, shopping blocks, videos, and People Also Ask boxes. Those features can push organic results lower, so users see them first and skip past your link.

That matters because the ranking number alone does not show how much screen space your result actually gets. A page that still ranks in the top five may sit below two ads, a featured snippet, and an AI answer. At that point, the result is visible in theory, but easy to miss in practice.

This is especially common on informational queries. Users often get enough of the answer from the SERP itself, so they never scroll. For a plain explanation of how Google says clicks can fall even when impressions stay strong, see Google’s traffic drop help page.

Ask yourself what changed above your listing:

- More ads can push organic results lower on the page.

- Featured snippets may answer the query before users reach your site.

- AI Overviews can reduce clicks by summarizing the topic up top.

- Shopping or video blocks can pull attention away from text results.

A stable rank is only part of the picture. If the page gets squeezed down the screen, clicks can fall fast.

This is where visibility loss and reporting noise start to separate. If your rank looks stable but the page now lives under heavier SERP features, the traffic drop is real. If the search page looks the same but clicks still fall, then the problem may sit in your title, snippet, or query mix.

Look for seasonality or a reporting mismatch before you panic

Before you treat the drop as a crisis, check whether demand changed first. Some traffic losses follow holidays, weekends, promotions, or the normal rise and fall of search interest. A page can look weaker in Search Console simply because fewer people searched for the topic during that period.

Date comparison errors can also distort the picture. If you compare a busy week with a slow one, the decline looks bigger than it really is. That is why it helps to compare the same season, the same day range, and the same business cycle when you can.

GA4 is useful here because it shows whether the drop appears outside Search Console too. If Search Console clicks fall but GA4 sessions stay steady, the issue may be reporting noise, a query mix shift, or a SERP change. If both tools drop together, the traffic loss is more likely real.

A few checks keep the analysis clean:

- Compare the current period with the same period last year when seasonality matters.

- Review holidays, sales, launches, and site changes that could affect demand.

- Check GA4 landing page traffic to confirm whether user visits also fell.

- Compare branded and non-branded traffic so one weak segment does not skew the whole picture.

The goal is simple: separate true visibility loss from a normal dip in interest or a reporting mismatch. Once you do that, the next fix is easier to choose, because you know whether you are fighting the search page, the demand curve, or the data itself.
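
For the year-over-year check, shifting the range back by exactly 52 weeks keeps the same weekdays on both sides, which a plain "same dates last year" comparison does not. A minimal sketch with hypothetical dates:

```python
from datetime import date, timedelta


def same_period_last_year(start: date, end: date):
    """Shift a date range back 52 weeks so weekdays stay aligned."""
    shift = timedelta(weeks=52)
    return start - shift, end - shift


# Hypothetical current period.
current = (date(2026, 5, 5), date(2026, 5, 11))
previous = same_period_last_year(*current)
print(previous[0].isoformat(), "to", previous[1].isoformat())
```

Holidays that fall on a fixed calendar date will not line up under this shift, so adjust by hand when a holiday sits inside either range.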

Turn the diagnosis into a recovery plan you can actually follow

You have spotted the problem. Now list your fixes in order of payoff. Quick technical changes often bring traffic back first. Content improvements take more time but tend to produce longer-lasting results. Working in that order keeps the plan manageable.

Prioritize fixes by impact and effort

Start with high-impact, low-effort changes. Indexing blocks, bad redirects, noindex tags, and broken pages top the list. These stop Google from showing your content at all. Fix them, and pages can reappear fast.

Content issues come next. They need deeper work, so recovery lags. A core update hit or an intent mismatch usually calls for a rewrite. Handle those after the technical wins so your attention is not split.

Follow this simple order:

- Clear crawl blocks like robots.txt errors or server issues.

- Remove noindex tags and fix redirects on key pages.

- Repair broken links and 404s that hit top traffic sources.

- Refresh thin content or duplicates last.

Within each category, order the fixes by how many pages they affect, and tackle one category at a time. That way, you can see progress week by week.
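
If the list of fixes gets long, a simple payoff score can settle the order. The sketch below ranks fixes by pages affected per hour of effort. The scoring rule, field names, and sample numbers are all assumptions, so adjust them to your own estimates.

```python
def payoff(fix: dict) -> float:
    """Pages affected per hour of estimated effort."""
    return fix["pages_affected"] / fix["effort_hours"]


def prioritize(fixes):
    """Return fixes sorted from highest to lowest payoff."""
    return sorted(fixes, key=payoff, reverse=True)


# Made-up backlog.
fixes = [
    {"name": "rewrite thin guides", "pages_affected": 15, "effort_hours": 30},
    {"name": "remove stray noindex", "pages_affected": 120, "effort_hours": 1},
    {"name": "fix redirect chain", "pages_affected": 40, "effort_hours": 2},
]

ordered = prioritize(fixes)
for fix in ordered:
    print(f"{fix['name']}: {payoff(fix):.1f}")
```

In this example the quick technical fixes rise to the top and the content rewrite lands last, which matches the order above.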

Request indexing, resubmit sitemaps, and monitor key pages

Finish a fix, then tell Google. Use the URL Inspection tool to request indexing on changed pages. Resubmit your sitemap too. Sites that Google already crawls often tend to have changes picked up sooner.

Don’t expect overnight jumps. Google does not guarantee a recrawl schedule. Updated pages on established sites are often recrawled within days, and requesting indexing can shorten the wait, but large or new sites can take several weeks.

Watch these over the next days and weeks:

- Pages report: Track whether the indexed page count rises.

- Performance data: Check clicks and impressions on fixed URLs.

- URL Inspection: Confirm live tests pass.

If nothing changes after two weeks, rerun the technical checks for blocks you may have missed. Patience matters, because Google sets its own crawl schedule.

Set up a simple weekly tracking routine

Recovery needs steady checks. Set a calendar reminder for Sundays. Open Search Console and review three spots until traffic holds steady.

Keep it practical:

- Top pages: Sort by click loss. Note if they climb.

- Top queries: Filter non-branded terms. Watch positions and CTR.

- Page indexing issues: Scan the Pages report for new errors or exclusions.

Log changes in a sheet: date, metric, fix applied. Technical recoveries often show within about four weeks. Content fixes may stretch to months.

These Google Search Console fixes for sudden traffic loss work when you stick to the plan. Pick your top issue today. Fix it, request the recrawl, and track next week. Traffic builds back one step at a time.

Conclusion

Most sudden traffic losses trace back through Search Console when you check the data in the right order. Start with performance trends and scope, then move to technical blocks, content gaps, manual actions, and SERP shifts.

That sequence turns guesswork into targeted fixes. You can spot the root cause quickly, whether it’s a stray noindex tag or a page that no longer matches search intent.

Recovery works when you find the issue early and fix it with care. Traffic returns as Google recrawls and re-ranks your pages.


- Google Search Console Fixes for Sudden Traffic Loss - May 14, 2026
